By: Joe Salesky, Head of AI Research

The Transformer Transformed

The transformer architecture changed how AI processes information. Now AI is changing how data is processed. Every inference call is an act of data transformation: unstructured input goes in, structured and actionable output comes out.

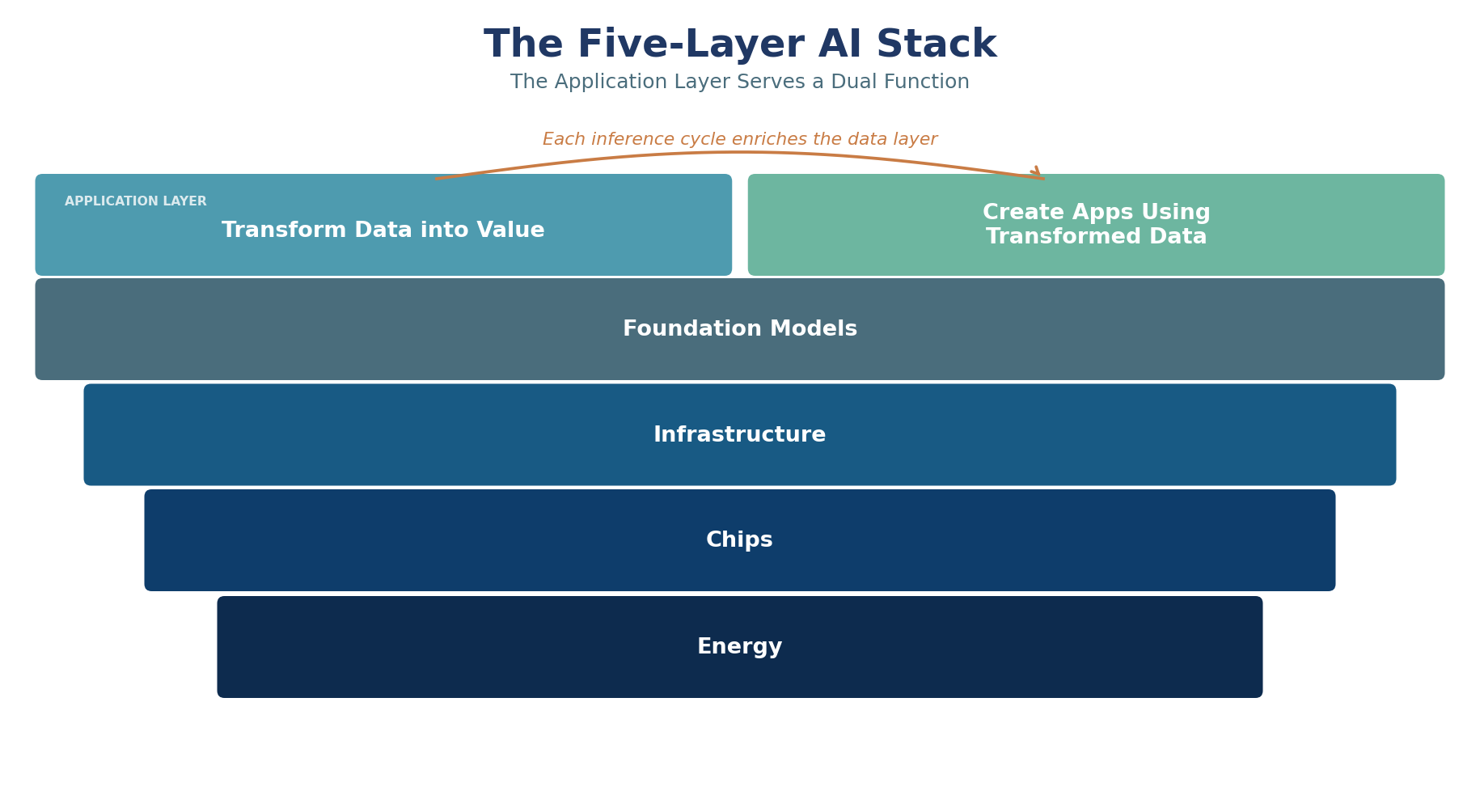

Jensen Huang framed the AI buildout as a five-layer stack at Davos in January 2026: energy, chips, infrastructure, foundation models, and applications. Each layer serves the one above it. Hundreds of billions of dollars have been invested, and trillions more are still required. Source: https://blogs.nvidia.com/blog/ai-5-layer-cake/

The question is whether value creation at the application layer justifies the capital deployed beneath it. Our reading is that the application layer serves a dual function. It transforms data into value and creates applications that use transformed data.

The tools themselves accelerate this cycle. AI loads information into AI-accessible repositories, builds data dictionaries that support contextual understanding, and creates metadata that speeds retrieval while reducing context consumption. Databases become resources that improve with use. Each query refines the schema. Each insight tightens the data dictionary. Each workflow creates reusable context. The database becomes a collaborator, not just a repository.

Our survey of 212,382 U.S. respondents between March 2025 and March 2026 tracks where this transformation is working and where it is stalling.

The Integration Gap

Among the 48,330 work users in our survey, the number one feature that would motivate an upgrade from free to paid AI is integration with apps, cited by 22.7%. Improved generative quality comes second at 19.2%.

The satisfaction data tells the same story. Integration satisfaction among work users scores an NPS of negative 10.1, the lowest of any dimension we measure for this group. Overall satisfaction scores positive 9.1. Productivity satisfaction scores positive 5.9. Work users find AI productive. They do not find it connected.
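
For readers who want to reproduce scores like these on their own data, the calculation can be sketched as follows. This is a minimal illustration, assuming the conventional NPS cutoffs (promoters rate 9 or 10, detractors rate 0 through 6); the function name and the sample ratings are hypothetical and are not drawn from the survey.

```python
from typing import Iterable


def net_promoter_score(ratings: Iterable[int]) -> float:
    """Return NPS: percent promoters (9-10) minus percent detractors (0-6)."""
    ratings = list(ratings)
    if not ratings:
        raise ValueError("at least one rating is required")
    if any(r < 0 or r > 10 for r in ratings):
        raise ValueError("ratings must be on a 0-10 scale")
    promoters = sum(1 for r in ratings if r >= 9)
    detractors = sum(1 for r in ratings if r <= 6)
    # Passives (7-8) count toward the denominator but neither group.
    return 100.0 * (promoters - detractors) / len(ratings)


# Hypothetical ratings for illustration only; not survey data.
sample_ratings = [10, 9, 8, 7, 6, 5, 3, 9, 2, 7]
sample_nps = net_promoter_score(sample_ratings)
print(sample_nps)
```

A negative score, as with integration satisfaction, means detractors outnumber promoters among the respondents who gave a rating.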

AI is solving its own integration problem. The early phase required users to carry data between tools manually. The current trajectory is direct connectivity: connectors, APIs, and open standards like Model Context Protocol are giving AI live access to databases, CRMs, email, calendars, and document repositories. AI enriches the data it touches, builds context from usage patterns, and makes each subsequent query against that data more productive. The integration gap is closing because AI is the mechanism that closes it.

Huang’s five-layer stack is the right framework. Our data adds a dimension to it: the application layer is not just consuming infrastructure, it is improving the data that feeds back into the stack. The integration gap measures how much of that feedback loop remains to be connected. As connectivity improves, the return on every layer beneath it compounds.

The Transformation Premium

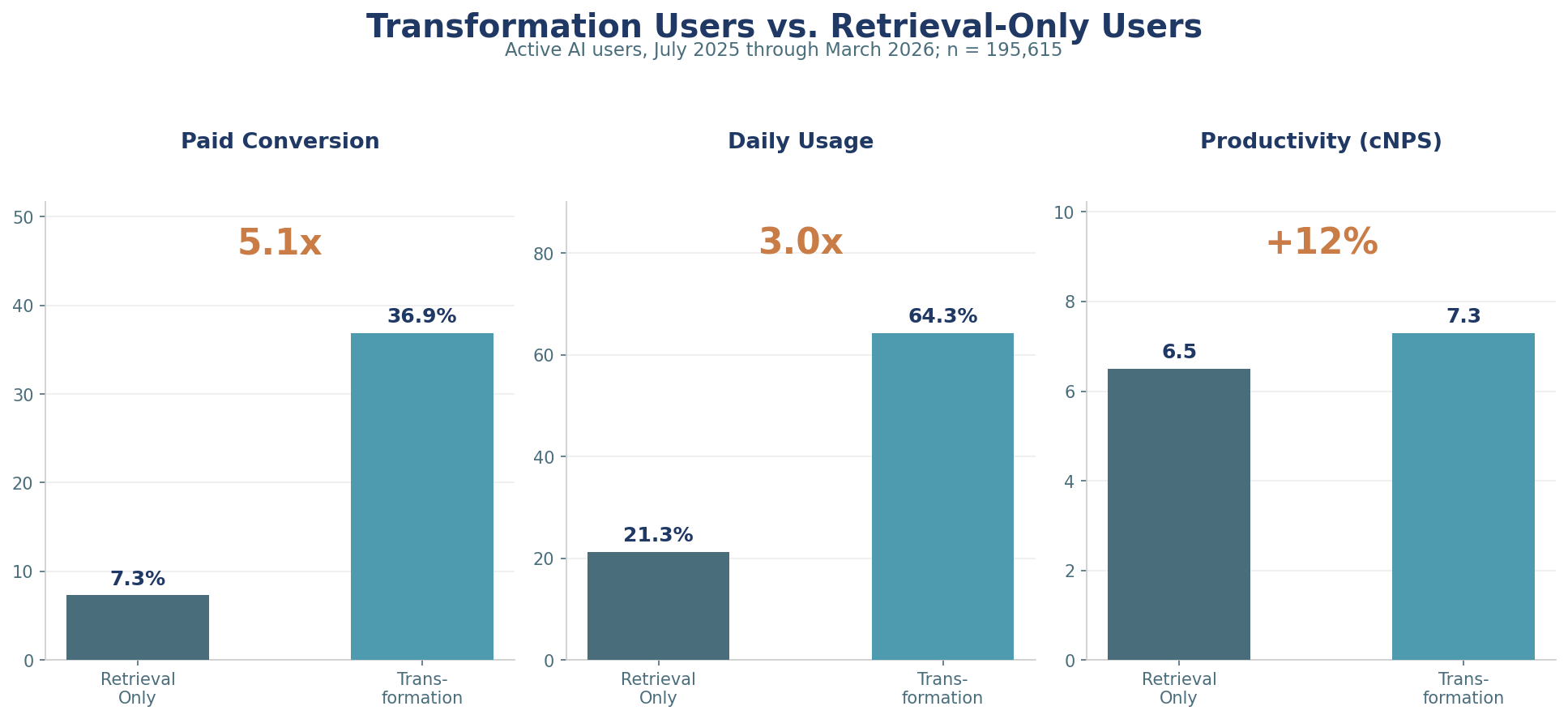

Users start with retrieval: web search, writing assistance, topical research. These require no user data. As users build fluency, they move toward transformation: data analysis, software coding, workflow automation. These require enterprise data. The economic difference is substantial.

Users who employ at least one transformation use case convert to paid subscriptions at 31.6%. Retrieval-only users convert at 13.3%. That is a 2.4x differential across 113,121 respondents. Software coding users convert at 40.2%. Automation users at 37.0%. Data analysis at 31.3%. The baseline for web search is 16.6%. Every metric moves in the same direction: users who connect their data to AI pay for it.
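
The 2.4x figure is the ratio of the two conversion rates quoted above. The short sketch below reproduces that arithmetic and applies the same ratio to the other rates in this paragraph. Comparing every rate to the retrieval-only rate is an assumption made here for illustration; the use case groups may overlap, so the multiples should be read as indicative only.

```python
# Paid-conversion rates (percent) as quoted in the text.
RETRIEVAL_ONLY = 13.3

rates = {
    "any transformation use case": 31.6,
    "software coding": 40.2,
    "automation": 37.0,
    "data analysis": 31.3,
    "web search": 16.6,
}


def conversion_multiple(rate: float, baseline: float = RETRIEVAL_ONLY) -> float:
    """Ratio of a group's paid-conversion rate to the baseline rate."""
    return rate / baseline


# Round to one decimal place, matching the precision used in the text.
multiples = {name: round(conversion_multiple(rate), 1) for name, rate in rates.items()}

for name, multiple in multiples.items():
    print(f"{name}: {multiple}x the retrieval-only rate")
```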

Among workers using AI, 64.9% save three or more hours per week. The value is not the hours saved. It is what those hours produce. Workers with paid AI tools perform analysis they could not previously perform, on data they could not previously access, at speeds that make the work economically viable for the first time. This is expanded output, not cost savings.

Implications for the Stack

The return on AI infrastructure investment is not measured in model benchmarks. It is measured in the volume and value of data transformations the economy can now perform. Cost savings create one-time value capture. Expanded output creates recurring value, drives additional inference demand, and justifies additional infrastructure investment. The flywheel is real. But it runs on data connectivity, not model capability.

The 56.3% of active users who increased their AI usage in the prior three months are climbing the transformation curve. The 19.7% of work users who say they would never pay are, in our reading, users who either have not yet encountered a use case that touches their own data or work for employers that restrict AI integration with enterprise systems. We expect both conditions to be temporary.

The embedded AI thesis assumed that placing AI inside productivity tools would solve the integration problem. Our data from the Recon Analytics User Illusion report suggests otherwise: Microsoft Copilot lost 7.3 percentage points of paid subscriber share in seven months despite being embedded in the most widely deployed productivity suite in the world. Embedding AI inside one application does not help when the work crosses applications. The integration gap is not between the user and AI. It is between the data sources AI needs to access.

Standards like Model Context Protocol address this directly. Rather than embedding AI inside every application, MCP allows AI to reach across applications from a single interface, connecting to databases, CRMs, email, calendars, and document repositories simultaneously. The platforms that build trusted connectors to enterprise data will capture the transformation premium. The platforms that confine AI to a single application will continue to lose share to those that do not.
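
The architectural idea of one interface reaching many data sources can be illustrated with a toy example. The sketch below is not the Model Context Protocol or its SDK; `Connector`, `InMemoryConnector`, `Hub`, and the sample records are all hypothetical names invented here to show the pattern of registering several sources behind a single query entry point.

```python
from dataclasses import dataclass, field
from typing import Protocol


class Connector(Protocol):
    """Hypothetical interface each data source exposes to the assistant."""

    name: str

    def search(self, query: str) -> list[str]: ...


@dataclass
class InMemoryConnector:
    """Stand-in for a real source such as a CRM or a calendar."""

    name: str
    records: list[str]

    def search(self, query: str) -> list[str]:
        # Case-insensitive substring match over the stored records.
        needle = query.lower()
        return [r for r in self.records if needle in r.lower()]


@dataclass
class Hub:
    """Single entry point that fans one query out to every registered source."""

    connectors: dict[str, Connector] = field(default_factory=dict)

    def register(self, connector: Connector) -> None:
        self.connectors[connector.name] = connector

    def search_all(self, query: str) -> dict[str, list[str]]:
        return {name: c.search(query) for name, c in self.connectors.items()}


# Made-up records for illustration only.
hub = Hub()
hub.register(InMemoryConnector("crm", ["Acme renewal due in May", "Globex churn risk"]))
hub.register(InMemoryConnector("calendar", ["Acme QBR on Tuesday", "Team offsite"]))
results = hub.search_all("acme")
print(results)
```

In this toy setup, adding a new data source means registering one more connector; the caller's query path does not change. That is the property the embedded, single-application approach lacks.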

The regenerative data layer is what makes the infrastructure investment compound. Unlike previous technology cycles where hardware depreciated and software required constant replacement, AI-enriched data environments improve with use. The data dictionary grows. The context layer deepens. The retrieval cost per query declines. Every dollar of infrastructure investment seeds a data asset that becomes more valuable over time.

The trillion-dollar question behind Huang’s stack is whether the applications at the top justify the infrastructure at the bottom. Our data says they do, but only when AI can reach the data. The 22.7% of work users asking for integration are telling the industry what comes next. The capex has been deployed. The models work. The data is there. The returns now depend on connecting them.

Methodology: Based on the Recon Analytics U.S. AI Survey, 212,382 business and consumer respondents surveyed between March 14, 2025 and March 6, 2026. Upgrade trigger analysis based on 48,330 work users and 57,863 home-only users. Paid conversion analysis based on 113,121 respondents with free or paid status recorded. Productivity NPS uses standard NPS methodology (promoters minus detractors on a 0–10 scale). Integration NPS based on 43,718 work users with satisfaction scores. Willingness to pay based on 21,915 work users who named a price. Note: The AI100045 use case battery was expanded in Q3 2025, creating a discontinuity in adoption rates for that question series; use case adoption rates cited in this paper are drawn from the AI100020 series, which remained consistent across the full survey period. All percentages unweighted. Recon Analytics is an independent research firm. This analysis was not commissioned or funded by any AI platform vendor, chip manufacturer, or cloud infrastructure provider.